On January 26, 2026, Microsoft announced the global launch of Maia 200, a next-generation AI accelerator designed specifically for inference workloads. The chip targets the computational cost and demands of Large Language Models (LLMs), and Microsoft promises a better cost-performance ratio than any other hardware currently in the Azure fleet.

Scott Guthrie, Microsoft's executive vice president of Cloud + AI, celebrated the launch as a milestone in the company's strategy. "The Maia 200 is a powerhouse in AI inference and the most efficient system we've ever deployed," the executive stated. According to Guthrie, the chip is fundamental to Microsoft's "heterogeneous AI infrastructure," serving as the foundation for everything from Microsoft 365 Copilot to OpenAI's advanced GPT-5.2 models.

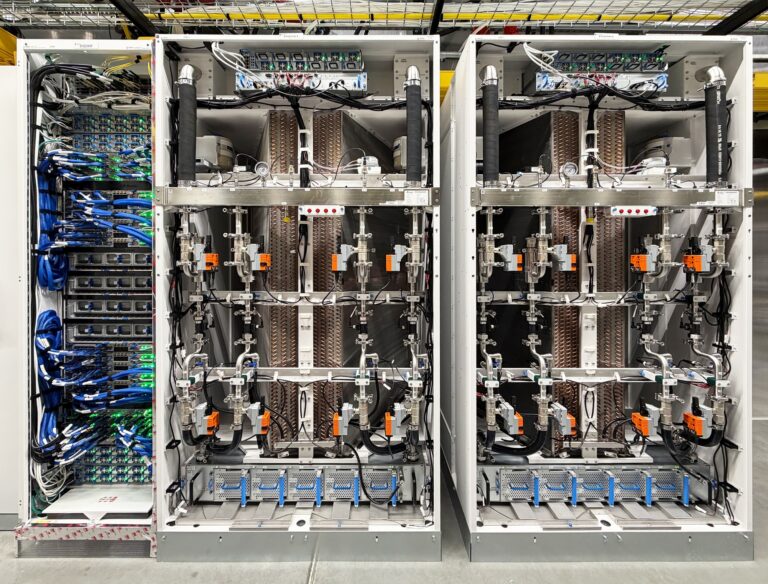

Manufactured on TSMC's 3-nanometer process and containing over 100 billion transistors, the Maia 200 delivers over 10 petaFLOPS at 4-bit precision (FP4), three times the FP4 performance of the third-generation Amazon Trainium, according to Microsoft. To keep that compute supplied with data, the chip pairs 216 GB of HBM3e memory with 7 TB/s of memory bandwidth, a combination aimed at reducing data bottlenecks.
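To put those figures in perspective, here is a rough back-of-envelope sketch in Python. It uses only the three numbers quoted above (10 petaFLOPS in FP4, 216 GB of HBM3e, 7 TB/s). The half byte per 4-bit weight, the decimal unit conversions, and the helper names are assumptions made for illustration, and the results ignore KV cache, activations, and real-world utilization.

```python
# Back-of-envelope arithmetic from the figures quoted in the article.
# Assumptions (not from Microsoft): decimal units, 0.5 bytes per FP4 weight,
# and no allowance for KV cache, activations, or runtime overhead.

PEAK_FP4_FLOPS = 10e15        # "over 10 petaFLOPS" in FP4
HBM_CAPACITY_BYTES = 216e9    # 216 GB of HBM3e
HBM_BANDWIDTH_BPS = 7e12      # 7 TB/s
BYTES_PER_FP4_WEIGHT = 0.5    # 4 bits = half a byte


def max_fp4_parameters(capacity_bytes: float = HBM_CAPACITY_BYTES) -> float:
    """Upper bound on FP4 weights that fit if weights filled all of HBM."""
    return capacity_bytes / BYTES_PER_FP4_WEIGHT


def flops_per_byte(flops: float = PEAK_FP4_FLOPS,
                   bandwidth: float = HBM_BANDWIDTH_BPS) -> float:
    """Peak FLOPs available for each byte read from HBM."""
    return flops / bandwidth


def full_memory_sweep_seconds(capacity_bytes: float = HBM_CAPACITY_BYTES,
                              bandwidth: float = HBM_BANDWIDTH_BPS) -> float:
    """Time to stream the entire HBM contents once at peak bandwidth."""
    return capacity_bytes / bandwidth


if __name__ == "__main__":
    print(f"Max FP4 parameters: {max_fp4_parameters() / 1e9:.0f} billion")
    print(f"FLOPs per HBM byte: {flops_per_byte():.0f}")
    print(f"Full HBM sweep:     {full_memory_sweep_seconds() * 1e3:.1f} ms")
```

Under these assumptions, the memory could hold at most about 432 billion 4-bit weights, and reading all of it once would take roughly 31 ms. The ratio of about 1,429 FLOPs per byte suggests that workloads with little data reuse, such as generating tokens for a single request, would likely be limited by memory bandwidth rather than by compute.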

The hardware is already being deployed in data centers in the US and is accompanied by the Maia SDK, facilitating adoption by developers. “The Maia 200 integrates seamlessly with Azure, and we are previewing the Maia SDK with a complete set of tools for creating and optimizing models for the Maia 200. It includes a full suite of features, including integration with PyTorch, a Triton compiler and optimized kernel library, as well as access to Maia's low-level programming language. This gives developers precise control when needed, while also allowing for easy portability of models between heterogeneous hardware accelerators. As we finalize the deployment, we are already designing future generations to set new standards,” concluded Guthrie.