CLM and Skyhigh list measures to prevent exposure of critical information in generative AI applications

When using ChatGPT, users can inadvertently pass on confidential data, posing a serious threat to companies' physical security and cybersecurity.

CLM, a Latin American value-added distributor focused on information security, data protection, cloud and data center infrastructure, lists the main points of attention when using these tools. Together with Skyhigh, a global leader in cybersecurity, it also describes best practices and technologies, such as CASB[i] and DLP[ii], that keep critical information from being exposed.

Generative Artificial Intelligence apps such as ChatGPT do improve business productivity, facilitate numerous tasks, improve services and simplify operations. Their features generate content, translate texts, process large amounts of data, quickly create financial plans, debug and write program code, and more.

However, Bruno Okubo, Skyhigh Product Manager at CLM, asks: do the many conveniences of these applications outweigh the significant risks of exposing confidential data, whether of companies or individuals? “Even with the innovations and progress that OpenAI incorporated into ChatGPT, with the new GPT-4 algorithm, the new version suffers from the same problems that plagued users of the previous version, especially regarding information security,” warns Okubo.

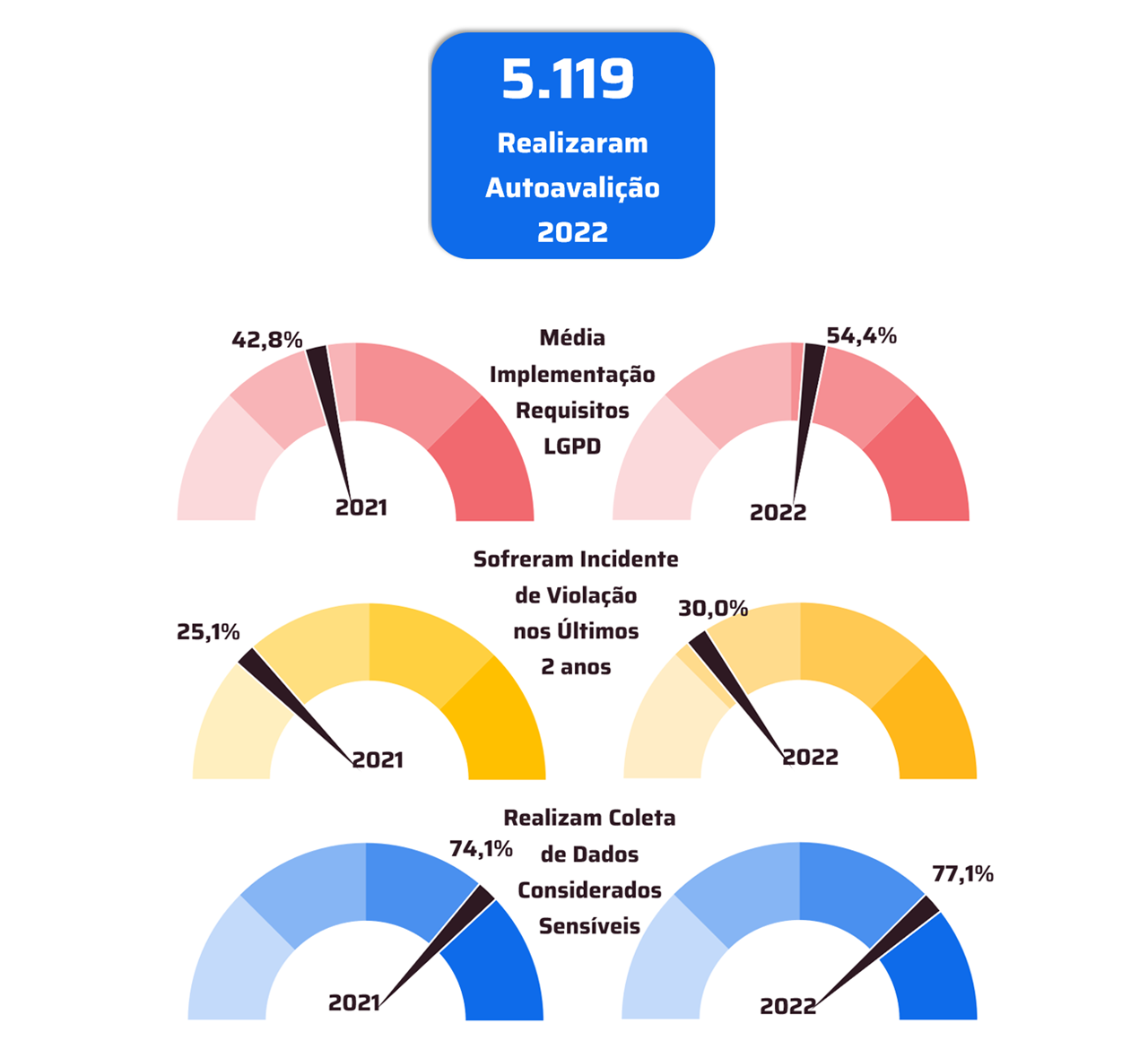

To avoid further increasing the risk of corporate data breaches and, consequently, of non-compliance with regulations such as the LGPD (Brazil's General Data Protection Law), CLM lists some examples of how sensitive data can be exposed in ChatGPT and in other cloud-based AI applications:

• Text with PII (personally identifiable information) can be copied and pasted into ChatGPT to generate email ideas, responses to customer queries, personalized letters or sentiment analysis

• Health information, including medical images such as CT and MRI scans, can be entered into the chatbot to produce individualized treatment plans or to be processed and enhanced by AI

• Proprietary source code can be uploaded by software developers for debugging, code completion, readability and performance improvements

• Files containing company secrets such as draft earnings reports, mergers and acquisitions (M&A) documents, pre-launch announcements, and other sensitive data can be uploaded for spelling and writing checks

• Financial information such as corporate transactions, undisclosed operating income, credit card numbers, customer credit ratings, statements and payment histories can be entered into ChatGPT for financial planning, document processing, customer onboarding, data synthesis, compliance, fraud detection, etc.

The fact is that, once this information is entered, the risk of a data breach is significant. “Companies need to take preventive measures very seriously to protect their sensitive data, especially when considering modern cloud-enabled tools,” explains the expert.

CLM and Skyhigh have developed a list of measures to prevent leaks.

• Monitor the use and excessive use of applications and limit the exposure of confidential information through them, in addition to protecting these documents from accidental loss, leaks and theft

- It is essential to ensure visibility of everything that happens on the corporate network, by adopting automated tools that continuously monitor which applications (such as ChatGPT) corporate users try to access, how, when, from where, how often, etc.

“The only way for an organization to have the broadest visibility into its network and introduce measures to control usage and access to these thousands of new applications is to adopt security technologies that automatically protect sensitive data, such as CASB – Cloud Access Security Broker,” points out Okubo.

• It is essential to understand the different levels of risk that each application represents for the organization and to be able to define granular access control policies in real time, based on categorizations and security conditions that may change over time

- While the most explicitly malicious applications should be blocked, when it comes to access control, the responsibility for using apps like ChatGPT should often be assigned to users. The company then tolerates, and does not necessarily stop, activities that may make sense for a subset of business groups or for most of them.

- Adopt access control policies that include real-time training workflows that trigger every time users open ChatGPT, such as customizable warning pop-ups that offer guidance on responsible use of the app, the potential risk associated with it, and a request for acknowledgment or justification

• Manage unintentional or unapproved movement of sensitive data between cloud application instances, taking into account both application risk and user risk

• Although ChatGPT access can be granted, it is critical to limit uploading and posting of highly sensitive data via ChatGPT and other potentially risky data exposure vectors in the cloud

Skyhigh explains that limiting uploads and posts of sensitive data via ChatGPT can only be done using modern data loss prevention (DLP) techniques powered by ML and AI models, capable of automatically identifying sensitive data streams and categorizing posts with the highest level of accuracy.

- Make employees aware of applications and activities considered risky. This can be done primarily through real-time alerts and automated training workflows, involving the user in access decisions after risk recognition.

“Human beings make mistakes. Thus, users can put sensitive data at risk, often without realizing it. The accuracy of DLP tools, delivered in the cloud, ensures that the system only monitors and prevents uploads of confidential data (including files and copied and pasted texts that remain in the clipboard) in applications such as ChatGPT, without interrupting harmless queries and safe tasks,” warns Okubo.
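The selective behavior described above, where only text containing confidential data is stopped, can be illustrated with a minimal Python sketch. It is not Skyhigh's implementation: the function names and the two patterns are assumptions chosen for illustration, and commercial DLP engines rely on far richer classifiers than a pair of regular expressions.

```python
import re

# Hypothetical patterns for illustration only; not taken from any DLP product.
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(number: str) -> bool:
    """Return True if the digits pass the Luhn checksum used by payment cards."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    checksum = 0
    # Double every second digit from the right; subtract 9 if the result exceeds 9.
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        checksum += digit
    return len(digits) >= 13 and checksum % 10 == 0


def find_sensitive_data(text: str) -> list[tuple[str, str]]:
    """Return (kind, match) pairs for email addresses and plausible card numbers."""
    findings = [("email", m.group()) for m in EMAIL_RE.finditer(text)]
    for m in CARD_RE.finditer(text):
        # The checksum filters out most digit runs that are not card numbers.
        if luhn_valid(m.group()):
            findings.append(("card_number", m.group()))
    return findings


if __name__ == "__main__":
    prompt = "Reply to ana@example.com about card 4111 1111 1111 1111"
    for kind, value in find_sensitive_data(prompt):
        print(f"{kind}: {value}")
```

A prompt with no findings would pass through untouched, which mirrors the idea of not interrupting harmless queries; a prompt with findings could be blocked or redacted before it leaves the corporate network.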

It is worth mentioning that selectively interrupting uploads and postings of confidential information in ChatGPT, combined with automated, real-time visual training messages, is a way to educate employees about violations, inform them about corporate security policies and, ultimately, mitigate risky behavior.
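The combination of blocking, tolerating and coaching described in the measures above can be sketched as a small policy function. This is only an illustration under stated assumptions: the risk categories ("low", "medium", "high"), the `Request` fields and the `decide` function are hypothetical and do not correspond to any CASB product's API.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    COACH = "coach"  # show a warning and request acknowledgment or justification
    BLOCK = "block"


@dataclass
class Request:
    app: str
    app_risk: str  # hypothetical categories: "low", "medium", "high"
    contains_sensitive_data: bool
    user_acknowledged: bool = False


def decide(request: Request) -> Action:
    """Map an access or upload attempt to a policy action."""
    # Explicitly malicious or high-risk applications are blocked outright.
    if request.app_risk == "high":
        return Action.BLOCK
    # Uploads carrying sensitive data are stopped even when the app is tolerated.
    if request.contains_sensitive_data:
        return Action.BLOCK
    # Tolerated apps trigger a coaching pop-up until the user acknowledges it.
    if request.app_risk == "medium" and not request.user_acknowledged:
        return Action.COACH
    return Action.ALLOW


if __name__ == "__main__":
    first_visit = Request(app="ChatGPT", app_risk="medium", contains_sensitive_data=False)
    print(decide(first_visit).value)
```

In this sketch the sensitive-data check takes precedence over the user's acknowledgment, so accepting the warning pop-up never unlocks the upload of confidential content.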

- [i] Cloud Access Security Broker (CASB) protects data and stops cloud threats across SaaS, PaaS and IaaS from a single, cloud-native enforcement point. It is a software tool or service that sits between an organization's on-premises infrastructure and a cloud provider's infrastructure, acting as a gatekeeper and allowing the company to extend the reach of its security policies beyond its own infrastructure.

- [ii] DLP (Data Loss Prevention) prevents data loss by detecting potential breaches through filtering and monitoring. The solution blocks unauthorized traffic of sensitive data while it is in use, in motion and at rest.